OWASP Top 10 LLM Risks Explained

The OWASP Top 10 for LLM Applications highlights the evolving risks in generative AI systems. Understand the risks and mitigations.

Find prompt injection, data leakage, unsafe outputs, insecure agent workflows, and other AI-specific risks before attackers do.

Outpost24 helps organizations uncover weaknesses in LLMs, AI-powered applications, and agentic systems through expert-led adversarial testing.

As organizations embed AI into customer-facing applications, internal workflows, and agentic systems, they create a new AI attack surface that requires dedicated adversarial testing.

Our experts map your AI environment, including models, prompts, RAG pipelines, agents, APIs, and connected systems.

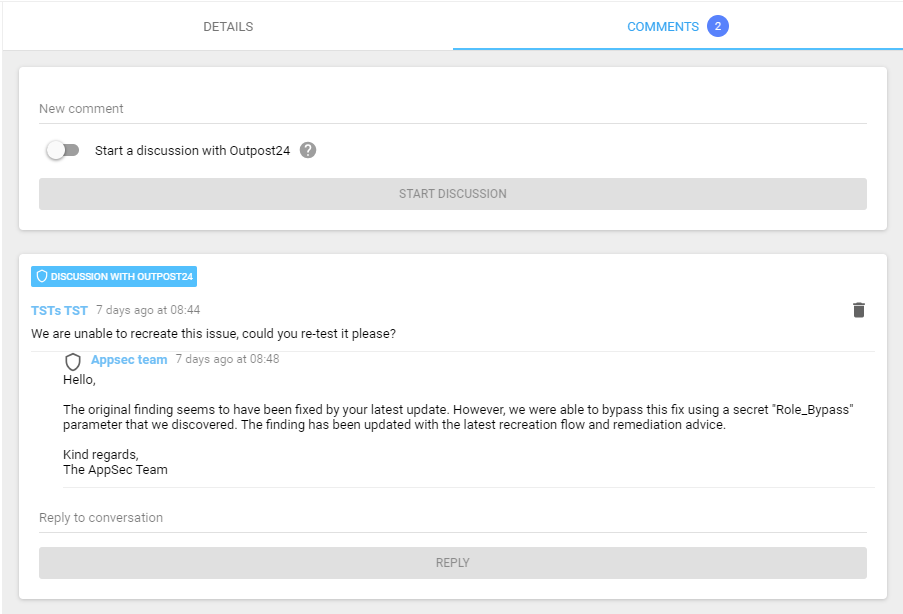

Our certified penetration testers simulate real-world attacks against prompts, workflows, permissions, integrations, and data access paths.

Receive prioritized findings aligned to the OWASP Top 10 for LLMs, remediation guidance tailored to AI architectures, and audit-ready reporting.

Simplify your compliance and audit efforts. Outpost24 AI Penetration Testing supports organizations preparing for the EU AI Act and NIST AI RMF.

We test the full AI attack surface, including: LLM prompts, guardrails, and system instructions; Retrieval-Augmented Generation (RAG) pipelines; agent workflows and tool use; supporting APIs, interfaces, and integrations; access controls between models and internal systems; sensitive data exposure risks; unsafe outputs and harmful model behavior; and the authentication, authorization, and session controls around AI services.

Yes. Testing before launch helps identify vulnerabilities earlier, when they are typically faster and less costly to fix. It also gives your team more confidence before go-live and helps demonstrate security due diligence from day one.

Partially. Our web application assessments include testing of AI-integrated functionality within the application scope. However, they do not cover AI-specific risks at a deeper level, including those related to the underlying model and infrastructure. A dedicated AI/LLM pentest is recommended when AI is a core part of the product. If unsure, bring it up at scoping and the team will advise on the right approach.

Explore additional resources.

Check our latest research, blogs, and best practices to level-up your cybersecurity program.

As application vulnerabilities build and threaten security, learn how combining EASM and pentesting can proactively secure your organization.

Prompt injection attacks are a growing risk in LLMs. Understand how they work and reduce the risk of this LLM-specific vulnerability.
