16 Apr 2026

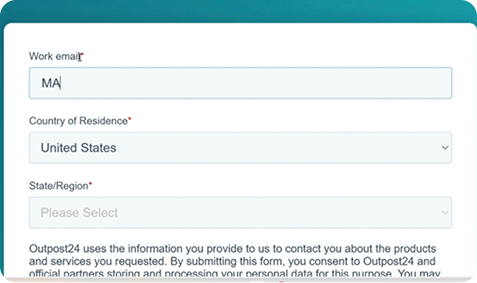

Outpost24 has launched a new AI & LLM penetration testing solution to help organizations securely test their AI and LLM...

14 Apr 2026

Today is Microsoft Patch Tuesday for April 2026. This month's release addresses 165 vulnerabilities. This...

10 Apr 2026

Learn how Outpost24, as a PCI ASV (Approved Scanning Vendor), helps organizations comply with the latest PCI DSS standards.

16 Mar 2026

Understand how an AI agent hacked McKinsey’s internal AI platform ‘Lilli’, and the lessons organizations should take from this exercise.

16 Mar 2026

Understand the link between evolving cyber threats and the vulnerabilities enabling their success, plus practical advice to close the gaps.